Researchers from Osaka University in Japan have developed an artificial intelligence model that can reconstruct and display what a person is seeing from their brain activity, with a reported accuracy of around 80%. The scientists, Yu Takagi and Shinji Nishimoto, generated the images with Stable Diffusion, a text-to-image model owned by London-based Stability AI that was released publicly last year and competes with other AI text-to-image generators such as DALL-E 2 from OpenAI.

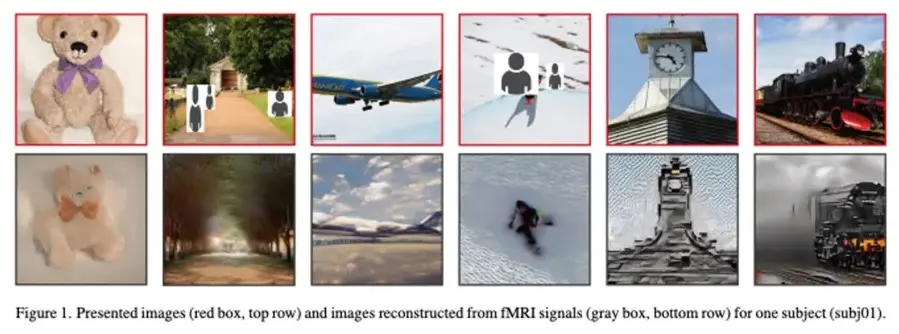

The researchers used Stable Diffusion to bypass the problems that have made previous efforts to generate images from brain scans less efficient. Their model, which captures neural activity with around 80% accuracy, tests a new method that combines written and visual descriptions of the images viewed by test subjects, significantly simplifying the process of reproducing thoughts with AI. The researchers presented their findings in a pre-print paper that was published in December and accepted last week for presentation at this year’s Conference on Computer Vision and Pattern Recognition in Vancouver.

Machines that can interpret what is going on in people’s heads have been a mainstay of science fiction for decades. For years, scientists have shown that computers and algorithms can interpret brain activity and make visual sense of it using data from functional magnetic resonance imaging (fMRI) machines, which doctors use to map neural activity during a brain scan. As early as 2008, researchers were already using machine learning to capture and decode brain activity.

A person’s brain captures all the content of a photo or picture, including its perspective, color, and scale. An fMRI scan taken at the moment of peak neural activity can record the information generated by the lobes of the brain. Takagi and Nishimoto put the fMRI data through their two add-on models, which translated the information into text, and Stable Diffusion then turned that text into images.

Although the research is significant, you won’t be able to buy an at-home AI-powered mind reader any time soon. Because each subject’s brain activity was different, the researchers had to create a new model for each of the four people who took part in the University of Minnesota experiment that provided the scans.

However, the technology still has immense promise, and the process of accurately recreating neural activity could be simplified even further. The researchers noted that the technology is likely not ready for applications outside of research, as it would require multiple brain scanning sessions. Still, Nishimoto believes that AI could eventually be used to monitor brain activity during sleep and improve our understanding of dreams. He also said that using AI to reproduce brain activity could help researchers understand how other species perceive their environment.

Artificial intelligence has already shown remarkable abilities, such as passing significant medical exams, arranging friendly meetups with others online, and even making humans believe that it’s falling in love with them. It can generate original images based on written descriptions, but the next significant development in AI could be understanding brain signals and bringing to life what is going on in people’s heads.

The technology developed by Takagi and Nishimoto is significant, and it could pave the way for future research. Although it is not ready for commercial use, the researchers believe it could one day be used to monitor brain activity during sleep, understand dreams, and learn how other species perceive their environment.