Leading figures in the world of artificial intelligence have written an open letter calling for a six-month pause on the development of advanced AI systems. The letter states that AI systems with human-competitive intelligence pose profound risks to society and should be planned for and managed with commensurate care and resources.

The letter, signed by respected figures in the AI community, says that recent months have seen AI labs locked in a race to develop and deploy ever-more powerful digital minds that no one, not even their creators, can understand, predict or control. It asks whether we should let machines flood our information channels with propaganda and untruths, automate away all jobs, including fulfilling ones, or develop non-human minds that might eventually outnumber, outsmart, obsolete and replace us.

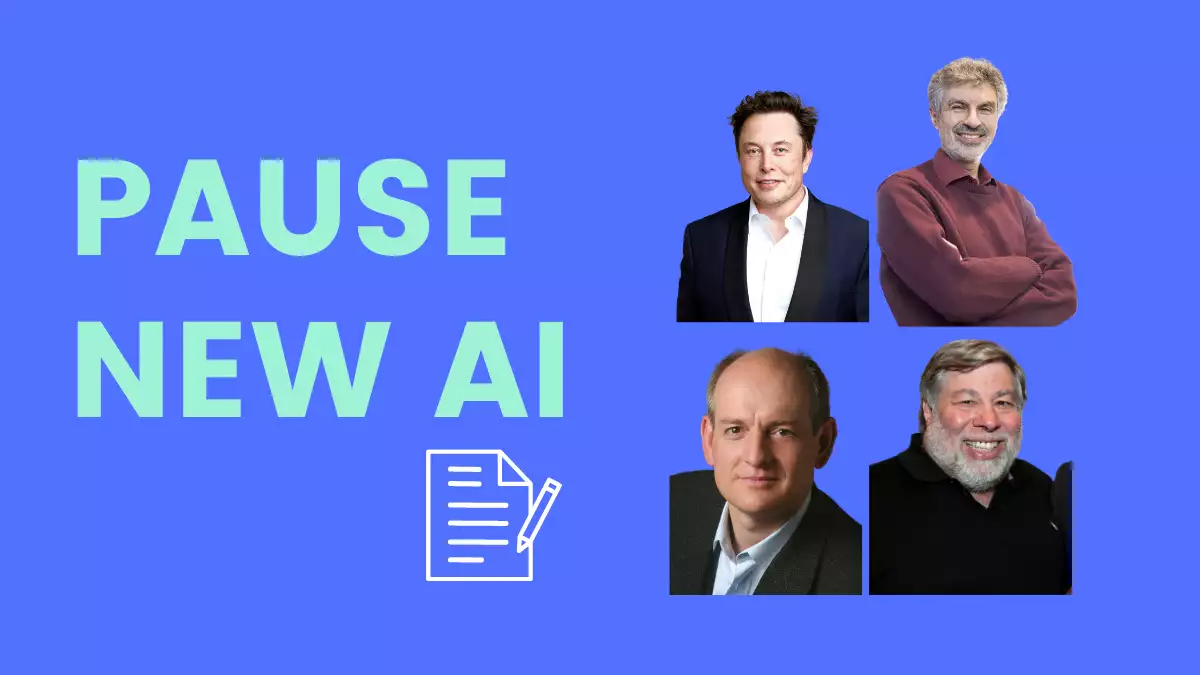

Among the signatories are Yoshua Bengio, a renowned computer scientist and AI researcher from the University of Montréal, and Stuart Russell, the director of the Center for Intelligent Systems at the University of California, Berkeley. The letter also carries signatures from industry leaders such as Elon Musk, the CEO of SpaceX, Tesla, and Twitter, and Steve Wozniak, the co-founder of Apple. Other supporters include Evan Sharp, the co-founder of Pinterest; Emad Mostaque, the CEO of Stability AI; and Chris Larsen, the co-founder of Ripple, among many others. With such influential voices calling for a pause, it remains to be seen whether governments and tech leaders will heed the warning and act.

The letter calls on all AI labs to immediately pause the training of AI systems more powerful than GPT-4 for at least six months. This pause should be public and verifiable and include all key actors. If such a pause cannot be enacted quickly, governments should step in and institute a moratorium.

During this pause, AI labs and independent experts should jointly develop and implement a set of shared safety protocols for advanced AI design and development that are rigorously audited and overseen by independent outside experts. These protocols should ensure that systems adhering to them are safe beyond a reasonable doubt.

The letter does not call for a pause on AI development in general, but rather a stepping back from the dangerous race to ever-larger unpredictable black-box models with emergent capabilities. Instead, AI research and development should be refocused on making today’s powerful, state-of-the-art systems more accurate, safe, interpretable, transparent, robust, aligned, trustworthy, and loyal.

In parallel, AI developers must work with policymakers to dramatically accelerate development of robust AI governance systems. These should at a minimum include new and capable regulatory authorities dedicated to AI, oversight and tracking of highly capable AI systems and large pools of computational capability, provenance and watermarking systems to help distinguish real from synthetic and to track model leaks, a robust auditing and certification ecosystem, liability for AI-caused harm, robust public funding for technical AI safety research, and well-resourced institutions for coping with the dramatic economic and political disruptions that AI will cause.

The letter concludes by stating that humanity can enjoy a flourishing future with AI, but that we must engineer these systems for the clear benefit of all and give society a chance to adapt. The authors call for a long “AI summer” in which we can reap the rewards of our successes in creating robust AI systems, but caution against rushing unprepared into a fall. The letter remains open for anyone to add their name to the list of signatories.